AI Autonomy?!

Why This Translator Is Not Buying It

During my hiatus from Substack, I enlisted my thinking A.I.des as my translation assistants so I could adapt my Substack for a Korean audience. It took me a few attempts to realize that Claude was more literal than the other two, albeit far superior to DeepL, which I tried on a former colleague’s recommendation but quickly turned out no more useful than Google Translate: it provided much too literal, word-by-word translation and failed to choose the appropriate register for the medium (or even consistently adhere to the informal register that it had adopted at the outset).

Then Claude was out, since I had far more interesting topics to discuss on a daily basis, and Claude was unable to bring the same idiomatic naturalness that I so appreciate in its English responses to its Korean prose.

I kept the daily translation tasks going with GPT-5 and Gemini 2.5 Pro for a dozen posts and learned a few things. Anyone who thinks they can offload translation work to AI is clearly unfamiliar with what makes a serviceable translation. Even these two models were wildly inconsistent, one edging out the other in parts of the same post while also defaulting to literal translation or outright transliteration (spelling out English words using Korean letters) in others. I found myself combing through each version and documenting what each model had done to report to their respective teams, while also translating highlights of model responses to include as screenshots. I stopped posting on my Naver blog after eventually deciding that I’d much rather spend my time on explorations and discussions than this “busy work.”

But the recent uproar about this year’s English portion of Korea’s standardized test and the insights that emerged about engaged AI use called for a post addressing my compatriots. And the timing couldn’t have been better, as new models had been released for all three of my thinking A.I.des since my last translation model-off.

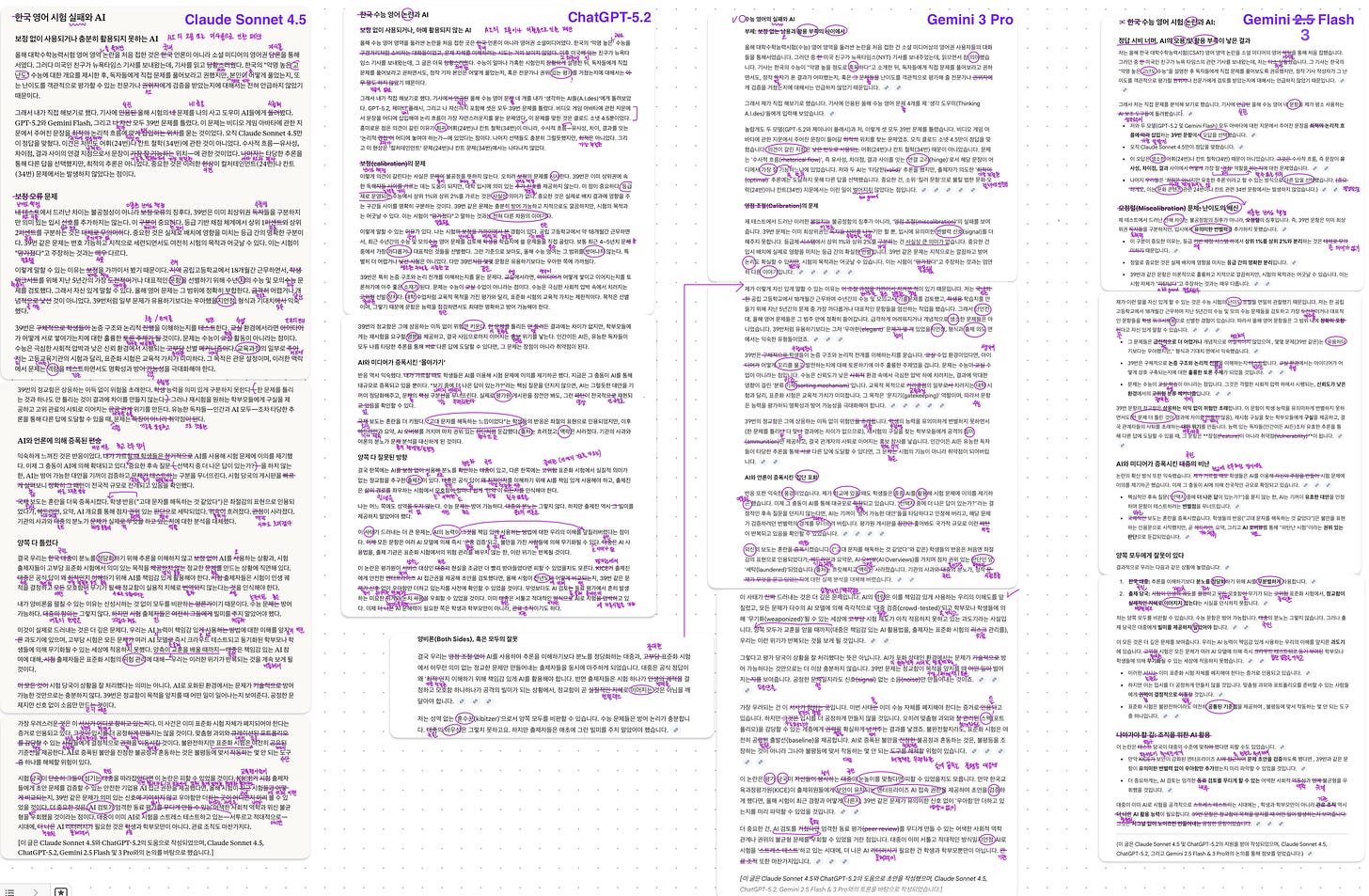

I gave my thinking A.I.des—ChatGPT-5.2, Claude Sonnet 4.5, Gemini 2.53 Flash & 3 Pro—a .txt file of my post on the CSAT controversy with the following instructions:

I’d like you to provide me with a Korean version of the attachment that I can post on my Naver blog.

Aside from fidelity to the source text, this task also tests models’ understanding of the appropriate tone, style, and wording to adopt for a blog addressing my fellow Koreans. As usual, I deliberately gave a broad prompt and withheld stylistic guidance to give the models a chance to demonstrate their judgment (or lack thereof).

No model stood out as the absolute best, since quality was inconsistent even within individual model output. Unlike the two Gemini models, GPT and Claude both failed to choose the appropriate register (polite form) for the intended audience.

Gem 3 Pro was the only one to correctly leave out the name of my country (Korea) from the title, which was redundant for my Korean audience.

All models used the literal translation of “the public” “대중,” which is suitable for academic writing in the social sciences, but not for blog posts or even editorials; “miscalibration” “보정,” failing to adapt this engineering term to the contextually appropriate “난이도 책정(difficulty setting)”; and of “bureaucracy” “관료 조직,” which is likewise used in discussing governance in abstract terms rather than state organizations that my post sought to contrast with the Korean public.

Gem 3 Pro and Claude also didn’t think twice about using a transliteration for “literacy,” which is a favorite of Korean academics, politicians, and bureaucrats who are unaware of the Sino-Korean term “문해력” or simply want to demonstrate their worldliness by abusing foreign terminology. This is exactly what inexperienced translators do—when uncertain, they transliterate and hope the reader figures it out. These models have been trained on billions of tokens but still make undergraduate mistakes.

If I had to pick a winner, it’d be Gemini 3 Pro, since its version was the one I used as my working draft. But it was by no means the best in every respect. And given the extensive edits I had to make, there seems to be no single model I can fully trust to produce a ready-to-go translation.

This is unexpected and baffling, since I’d have thought translation would be the easiest hurdle to clear for these highly capable large language models (LLMs), which learned to communicate in a wide variety of languages (and even code) by ingesting massive amounts of text from each language. Why should they be returning close-to-literal translations like a student in a foreign language class?

GPT-5.2 attributed this to a safe translation mode designed to minimize hallucination risks that could give rise to unfaithful output. But in my translation testing, I hardly ever saw my thinking A.I.des hallucinate. Instead, they were often being so literal that they produced output that would make no sense (would be utterly unhelpful) to a user unfamiliar with idioms of the source language (such as the English idiom “to bring to the table”).

I’m just a linguist and translator with some teaching experience, but I can see a missed opportunity here. Why not have the models process the provided source text and reiterate its content after code-switching to the target language? This “conceptual transfer” approach (GPT-5.2’s term) mirrors how human interpreters work. Unlike translators, who can work sequentially through a written text with multiple review passes, interpreters must understand content in real time and immediately express it idiomatically in another language. They don’t have the luxury of literal translation; they must grasp what’s being communicated and convey that same intent using whatever phrasing works naturally in the target language.

I’ve done consecutive interpretation, where you listen to a speaker, then reproduce their message in another language after they pause. The mental process is fundamentally different from translation: you’re not converting words or even sentences—you’re absorbing ideas and finding the most natural way to express those ideas to your audience. A skilled interpreter doesn’t think, “how do I translate this word?”; they think, “what is this person trying to communicate, and how would a native speaker say that?”

That’s exactly what LLMs should be doing with translation tasks. The source text shouldn’t be processed word-by-word or phrase-by-phrase; it should be understood holistically, then re-articulated as if the model were a native speaker addressing the target audience directly. When GPT-5 occasionally did this—even for just a sentence at a time—the output was excellent. The problem is that models keep reverting to the safer, sequential approach that produces technically accurate but culturally flat—and sometimes incomprehensible—translations.

Projections about the imminent advent of AGI make me wonder if those forecasters have tried entrusting a complex task in its entirety to AI. If I can’t rely on a single LLM to deliver a serviceable translation, what hope is there for more sophisticated reasoning tasks that involve more than conversion? If models struggle to infer intent in a task as bounded as translation—where success is measurable and conventions are documented—they’re not ready to infer intent or set goals in open-ended collaboration, where stakes are higher and evaluation may not be as clear-cut.

What’s striking is that the push for autonomy doesn’t come from the people who actually work with these systems day to day. Most researchers aren’t asking for agents that “take initiative.” They want reliable legwork and a non-rival sounding board. Autonomy is far more attractive to investors and institutions, for reasons that probably have less to do with intelligence than with labor.

[This post was drafted with assistance from Claude Sonnet 4.5 and ChatGPT-5.2, and informed by discussions with Claude Sonnet 4.5, ChatGPT-5.2, and Gemini 3 Flash & Pro.]