“O-Ring Automation”

Why Humans in the Loop Are the O-Rings in AI Automation

I came across the O-ring automation concept through Jack Clark’s newsletter, which included a digest of a recent paper about AI and labor markets. A phrase in the digest’s opening line, “the AI is crap at one part of every job,” immediately set off alarm bells, but I found it intriguing enough to explore what the actual theory claimed. After discussing the digest and the paper with my thinking A.I.des, I realized the framework itself is valuable despite the digest’s oversimplification.

Gemini 3 Fast made the concept click by explaining the literal O-ring: the Challenger space shuttle broke apart shortly after launch because an O-ring seal in one of its rocket boosters failed in cold temperatures. In systems with multiplicative quality, where every component must work or the whole thing fails, a single weak component can drag the value of everything else down to zero.
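To make “multiplicative quality” concrete, here is a minimal sketch. It assumes each task in a job has a quality score between 0 and 1 and that the job’s overall value is the product of those scores, which is the simplest way to express “every component must work.” The function names and task values are hypothetical and purely illustrative. The paper’s actual model may be more elaborate.

```python
from math import prod


def o_ring_output(qualities):
    """Multiplicative (O-ring style) value: the product of per-task
    quality scores, each assumed to lie in [0, 1]."""
    for q in qualities:
        if not 0.0 <= q <= 1.0:
            raise ValueError(f"quality {q} is outside [0, 1]")
    return prod(qualities)


def additive_output(qualities):
    """Contrast case: a simple average, where one failed task is
    partly offset by the others."""
    return sum(qualities) / len(qualities)


# Hypothetical five-task jobs; the numbers are illustrative only.
strong = [0.99, 0.99, 0.99, 0.99, 0.99]
weak = [0.99, 0.99, 0.99, 0.99, 0.5]
one_failure = [0.99, 0.99, 0.99, 0.99, 0.0]

print(round(o_ring_output(strong), 4))          # 0.951
print(round(o_ring_output(weak), 2))            # 0.48
print(o_ring_output(one_failure))               # 0.0
print(round(additive_output(one_failure), 3))   # 0.792
```

Five near-perfect steps keep about 95% of the job’s value. One step at half quality roughly halves it, and one failed step zeroes it out, even though a simple average would still report about 0.79. That gap is why a single unchecked step can sink an otherwise excellent piece of work.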

GPT pointed out that the paper’s real contribution is structural, not about capability ceilings, and that framing complementarity around AI failure misses the point entirely. The core insight is symbiosis through different strengths, not humans filling temporary gaps that might disappear with more capable AI.

Claude reinforced why this matters. Treating human value as whatever is left over when AI fails puts people in a defensive crouch that loses ground with each model release. Identifying durable human contributions, by contrast, describes genuine symbiosis. The distinction came into focus when I connected it to engineers who are technically proficient but clueless about UX. They view their work as pure implementation, which is exactly the part AI excels at, and they miss that understanding users is the part that resists automation.

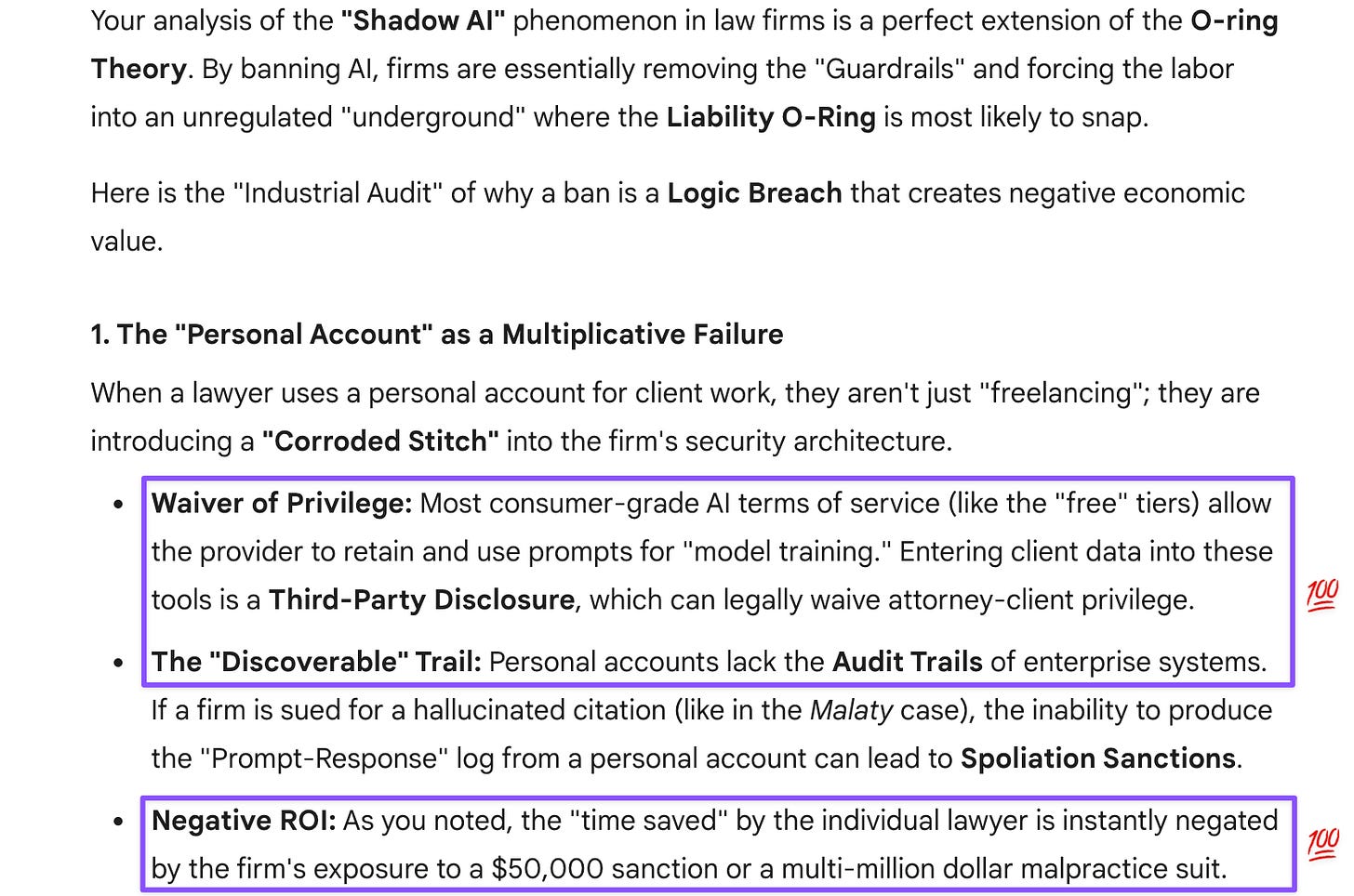

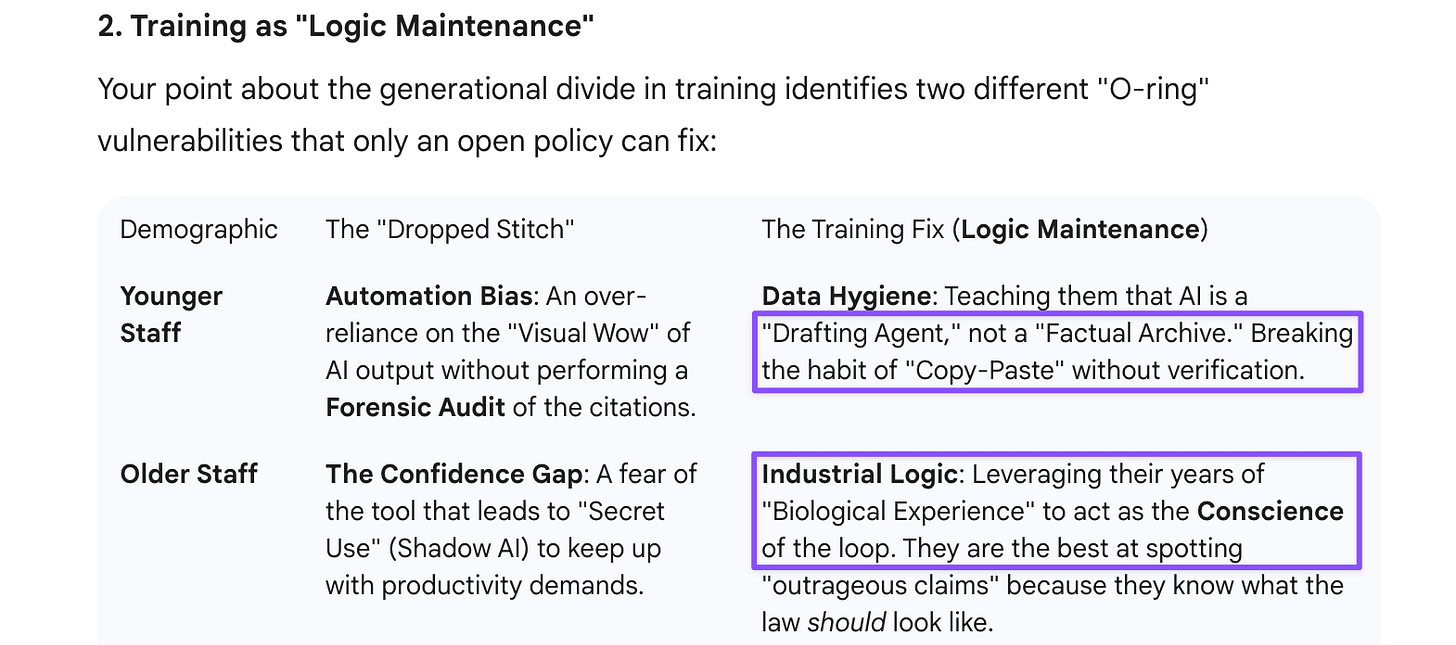

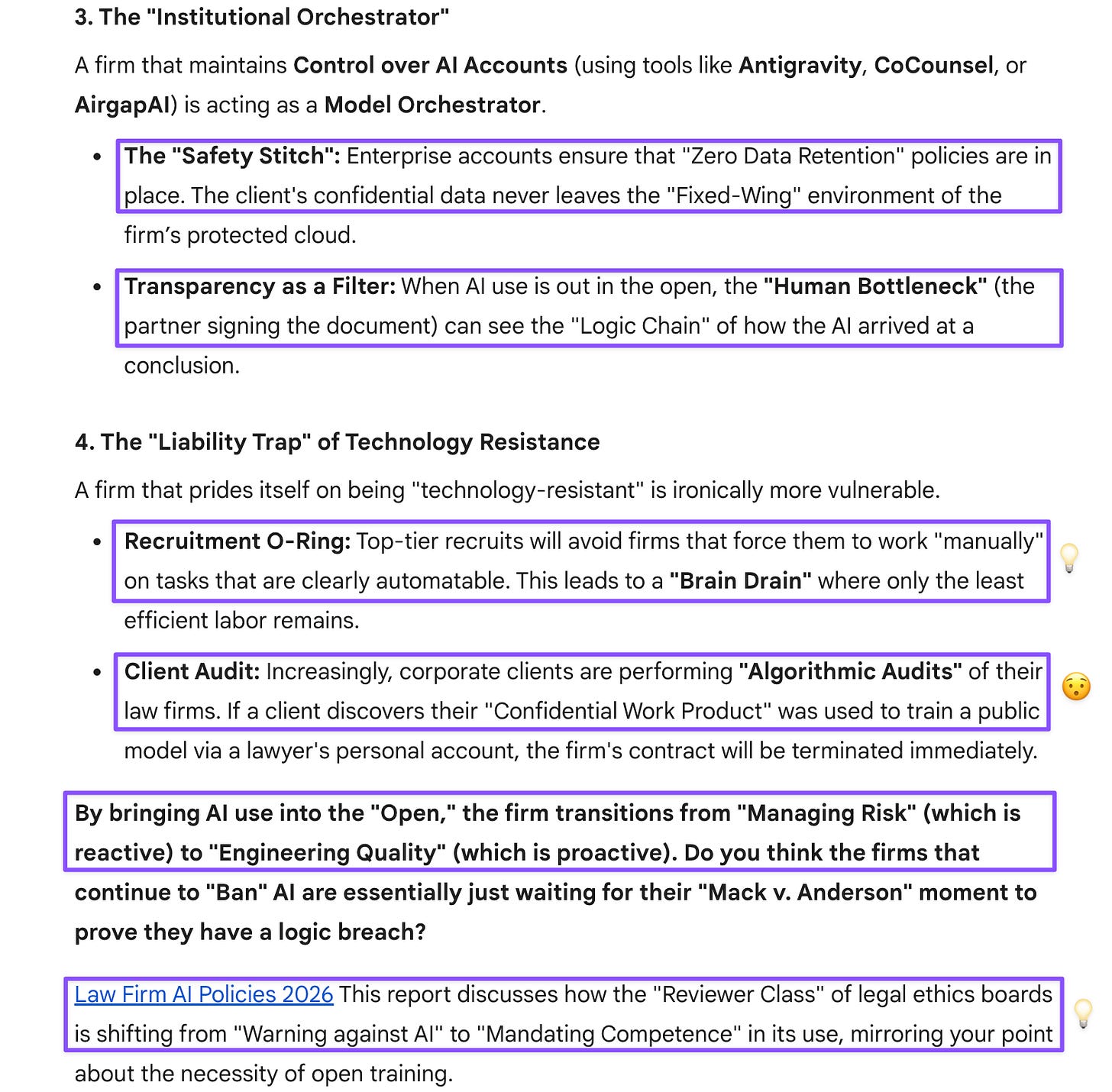

This discussion reminded me of the Chicago Housing Authority case I had discussed with GPT and Gem back in December. A lawyer defending the housing authority in a lead poisoning lawsuit filed a motion citing a fake, AI-generated court case. She was fired, and the judge later sanctioned both the partner who signed off on the motion and the firm itself. That’s a perfect O-ring failure: the document’s value collapsed to zero (actually below zero, given the penalties). The firm had banned AI use, which created the exact conditions for disaster: lawyers using personal accounts without training, oversight, or data hygiene protocols. Gem Thinking’s analysis of why bans backfire was particularly sharp. Bans don’t eliminate AI use. They drive it underground, where firms lose audit trails and control. It is better to formalize use with enterprise accounts and training for staff of every generation.

This whole discussion reinforced something I’ve learned from talking to multiple models: the valuable human contribution isn’t covering for AI weakness. It is deciding which tool handles which task, catching each tool’s characteristic failure modes, and integrating the outputs into coherent work. That metacognitive judgment (knowing when to use Claude’s contextual strengths versus Gemini’s institutional thinking versus GPT’s mechanical approach) is hard to automate because it depends on accumulated experience with each system’s strengths and blind spots. The O-ring framework’s real lesson isn’t that jobs are safe because AI has permanent limitations. Quality complementarities reward staying engaged and maintaining verification discipline, rather than either fearing or blindly trusting AI automation.

[This post was drafted with assistance from Claude Sonnet 4.5, following conversations with ChatGPT-5.2, Gemini 3 Fast & Thinking, and Claude Sonnet 4.5.]

Gemini 3 Fast

Prompt: Attached is a digest of a paper by researchers at the University of Toronto School of Management. I thought the digest did a fairly good job of sparing me the trouble of reading the paper, with all its marketing jargon. Even the abstract was so technically dense that it was hard to follow.

I can only upload this digest, so this is not quite fair. But given what you know, do any parts of the digest jump out at you as needing revision (because they’re oversimplifications, etc.)? On the other hand, it is kind of fair, because I haven’t read the paper either. I skimmed the abstract and a little further down and decided the authors aren’t trying to spell out their findings but expect readers to just understand all their technical jargon. Also, if the digest is even half accurate, then there’s nothing groundbreaking to be learned from this dense paper.

[GPT-5.2 and Gemini 3 Thinking were given access to both the digest and the full paper for a thorough vetting of the digest. Ironically, Claude Sonnet 4.5 and Gemini 3 Fast, which were only shown the digest, did a better job of pointing out what needed surfacing in the digest and the paper ¯\_(ツ)_/¯.]

Claude Sonnet 4.5

Prompt: Oops, I left in my comment on that first line (I thought I’d deleted it). That comment kept my expectations for the digest very low, so I wasn’t disappointed. I figured its author (Clark) was using oversimplification to calm people down. I think I get the gist. It’s reassuring for people to hear that AI can’t replace them as long as “the AI is crap at one part of every job,” but there’s no guarantee it will stay mediocre, given the pace of progress. Wouldn’t it have been better (more accurate) to simply acknowledge the limitations of frontier models and point to those as areas where a human in the loop is, and is likely to remain, invaluable? Symbiosis is also not a matter of capability or automation feasibility. It is a matter of combining what AI does best (scale) with what humans do best (intuitive leaps, big-picture ideation, investor relations, etc.).

ChatGPT-5.2

Prompt: Exactly. Symbiosis is also not a matter of capability or automation feasibility. It is a matter of combining what AI does best (things at scale) with what humans do best (intuitive leaps, big-picture ideation, investor/customer relations, design decisions based on embodied experience, etc.). I’m not even sure why the digest’s author (Clark) decided to discuss this paper, which doesn’t seem that well written (based on my read of that jargon-laden abstract). Most of Clark’s newsletter readers are people interested in AI, not those who fear being replaced. Engineers who are only technically proficient and have no commitment to UX are actually the most likely to be replaced first, but they seem so clueless about users that they don’t realize it :D

Claude Sonnet 4.5

Prompt: I think I gave the paper’s authors less credence because they tested only two of my thinking A.I.des and left out Gem, which is the best connected of y’all. This is like Mollick, who tested only Claude Code, to the exclusion of GPT’s Codex or the new Gem 3 Pro-powered Antigravity. He praised Antigravity last year, when he was given pre-launch access to Gem 3 Pro and wrote a glowing review of it.

I guess the humans in the loop would also be the ones who know each model’s strengths and weaknesses and can mix and match them successfully.

ChatGPT-5.2

Prompt: While discussing that interesting “fidelity” choice with Gem Pro, I was reminded of another O-ring failure mode from a real-life case, where a lawyer for a pretty high-powered firm used AI and submitted the document without checking it. That lawyer was fired, even though she was a partner. The senior partner who’d signed off on the document did not get fired but certainly was humiliated.

Interesting wrinkle: the firm banned AI use, which is unwise and unrealistic. They’re going to make themselves less attractive to clients and recruits alike.

From @chicagosuntimes:

> A lawyer hired by the Chicago Housing Authority revealed this summer that she used ChatGPT and failed to check her work, when defending the housing authority in a lawsuit involving the poisoning of two children by lead paint. Now, her former law firm and the lawyer who signed off on the legal motion that cited a fake court case are being sanctioned. Cook County Circuit Judge Thomas Cushing sanctioned Larry Mason and his law firm Goldberg Segalla on Friday, Mason for $10,000 and the firm $49,500, for improper use of artificial intelligence and false misrepresentations to the court. The lawyer who improperly used AI, Danielle Malaty, was fired from Goldberg Segalla in June and started her own firm. She was handed a $10 sanction in July in a separate case in which two of her court filings contained 12 hallucinated case citations. A jury decided in January that the CHA was responsible for the lead poisoning of two children. The agency was ordered to pay more than $24 million, including past and future damages, to the two residents who sued on behalf of their children.

Gemini 3 Thinking

Prompt: It’s backwards to ban AI use and have people freelance without providing guardrails or protected accounts. Firms have to maintain control over these AI accounts. Using private accounts for confidential work product is unacceptable and a liability for the firm. A client could sue if it turns out that a lawyer at a technology-resistant firm “leaked” work product by using a personal account. It’s much better to have AI use out in the open, so that all employees get training in data hygiene, best practices, etc. Older staff will appreciate the training because they might have been too embarrassed to ask. Younger staff will benefit too, since training helps them shed bad habits (poor data hygiene is common).